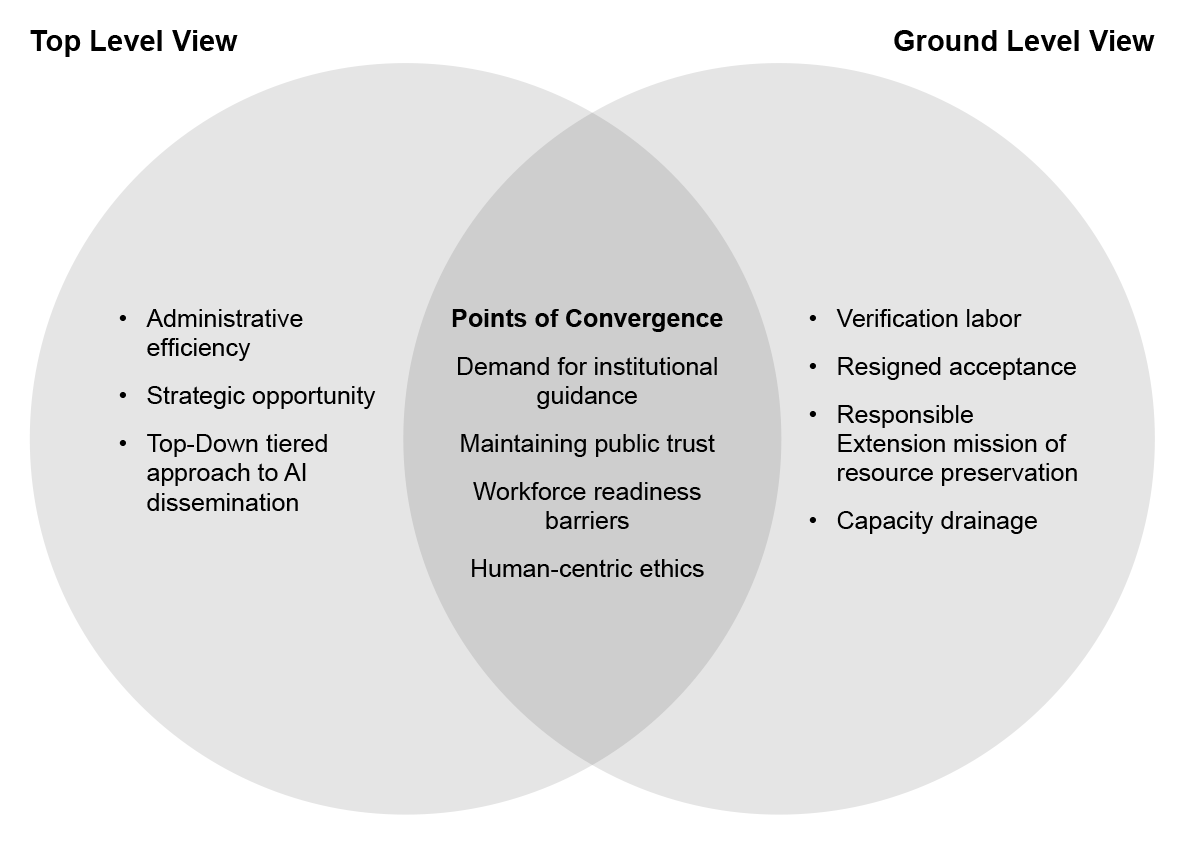

The AI Convening demonstrated that both Cooperative Extension and agInnovation leaders recognize AI as a defining factor in the future of research, education, and outreach across the Land-grant system. Participants viewed AI not as a single tool, but as an ecosystem-level transformation affecting how information is created, validated, shared, and applied. The discussions revealed broad enthusiasm and optimism about AI’s potential, while subsequent workforce-level findings point to a more complex operational reality defined by uncertainty, capacity strain, and ethical tension. The system’s success will depend on coordinated readiness, ethical governance, and investment in people and infrastructure. The convening sessions offered a clear message: both Extension and agInnovation are poised to take further steps in shaping responsible, mission-driven uses of AI, but doing so will require shared standards, structured collaboration, and a national vision that bridges leadership strategy with on-the-ground implementation.

Emerging Readiness and Distributed Innovation

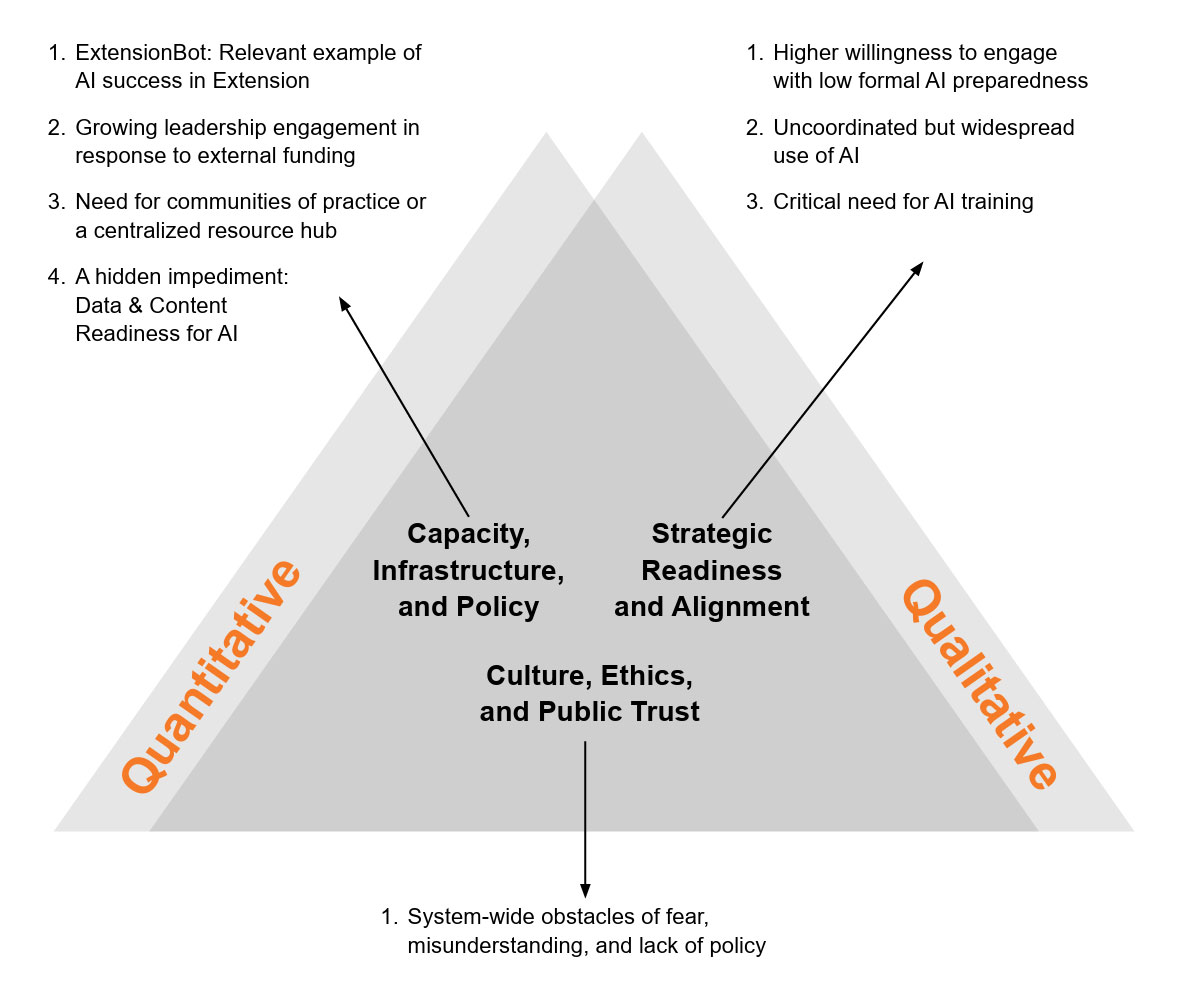

AI experimentation is already occurring across the Land-grant system. Faculty, researchers, and educators are independently piloting AI tools for literature synthesis, data analysis, stakeholder communication, and decision support. This decentralized experimentation has generated valuable insights, yet leaders acknowledged that progress remains uneven and largely uncoordinated. Workforce findings further indicate that this experimentation is often occurring without sufficient guidance, creating variability in practice and uncertainty around acceptable use.

Participants agreed that the next phase must focus on structured implementation. Both Extension and agInnovation institutions need frameworks that align experimentation with organizational priorities, create consistency across outputs, and promote cross-state learning and sharing. The challenge ahead is to connect local innovation with national coordination so that knowledge can scale without sacrificing institutional flexibility, while reducing the current fragmentation and policy ambiguity.

Workforce Development as the Central Bottleneck

A strong consensus emerged that workforce readiness is the most significant barrier to AI adoption. Both Extension and agInnovation rely on a workforce that blends subject-matter expertise with community engagement and applied research. Yet most professionals have not received structured training in AI literacy, ethics, or application. Workforce perspectives further reveal that AI is frequently perceived not as an opportunity, but as an additional burden layered onto already constrained workloads.

Participants emphasized that a tiered approach to workforce development is needed. Basic digital literacy must be coupled with advanced technical and ethical competencies for specialists, researchers, and educators. This training should not only focus on how to use AI tools, but also on how to critically evaluate AI-generated information, validate data, and communicate transparently with stakeholders. Importantly, readiness cannot be achieved without dedicated time, institutional support, and alignment between expectations and capacity. Without these conditions, training efforts risk limited adoption and uneven impact.

Human-Centered Ethics and Responsible Governance

Both Extension and agInnovation leaders reaffirmed that people must remain at the center of AI integration. Participants were clear that technology should augment human expertise, not replace it. This human-centric approach preserves the credibility, trust, and relational foundation that have defined the Land-grant mission for more than a century.

Leaders identified a strong need for shared ethical frameworks, including policies on attribution, data privacy, intellectual property, and algorithmic fairness. A framework developed collaboratively across the system could help ensure that AI tools are used transparently and in alignment with Land-grant values. Workforce findings reinforce this need, highlighting concerns around “intellectual sovereignty,” where professionals fear a shift from knowledge creation to verification of AI-generated content. Addressing this requires governance structures that preserve the visibility of human expertise, authorship, and scientific rigor.

Additionally, trust emerged as a central theme across both datasets. Trust is not viewed as an inherent feature of AI systems, but as a function of the transparency, accountability, and academic integrity of Extension professionals. Ensuring that AI-enabled outputs remain grounded in university-verified, research-based content will be essential to maintaining Extension’s role as a trusted public resource.

Infrastructure, Policy, and Data Readiness

Infrastructure disparities emerged as a major concern. While some institutions have advanced data systems and internal AI policies, others are still developing the foundational digital and policy environments necessary for integration. Participants noted that AI readiness requires not only technical infrastructure such as broadband, storage, and computing capacity, but also structured, available, and validated data.

Shared data repositories, consistent metadata standards, and aligned policies on availability and governance were identified as key enablers for cross-institutional collaboration. Given concerns around data privacy and security, institutions may also consider providing training and best-practice guidance for those building and using AI applications. These investments would allow Extension and agInnovation to more effectively connect research outputs with educational and applied use cases, creating a unified ecosystem of reliable, AI-ready information.

Workforce findings further emphasize that gaps in policy clarity and access to approved tools contribute to a broader sense of institutional ambiguity, where professionals are expected to manage risk without clear guidance. Addressing these gaps will be essential for enabling consistent and confident adoption across the system.

Implications for the Land-Grant System

The findings from the convening underscore that AI readiness represents both a challenge and an opportunity for Cooperative Extension and agInnovation. The decentralized nature of the Land-grant system remains a double-edged sword: it encourages innovation and adaptation, but can also lead to fragmentation if not intentionally aligned. The addition of workforce perspectives makes clear that this fragmentation is already being felt at the operational level, where professionals are navigating unclear expectations and uneven support structures.

To sustain national leadership in research and community engagement, Extension and agInnovation must advance in tandem. AI has the potential to accelerate the translation of research into practice, improve decision-making, and expand access to knowledge for all communities. Achieving this potential will depend on shared investment in training, infrastructure, policy development, and data stewardship.

At the same time, the system must reconcile emerging ethical tensions, including the environmental impact of AI technologies and their alignment with Extension’s mission of sustainability and resource stewardship. Addressing this dimension will require intentional decision-making around tool selection, usage, and long-term impact.

Ultimately, the path forward is not defined by adoption alone, but by intentional, values-aligned integration. The Land-grant system is uniquely positioned to lead in this space, not only by implementing AI, but by shaping how it is applied in the service of public good, scientific integrity, and community trust.